A New Shift in AI Hardware

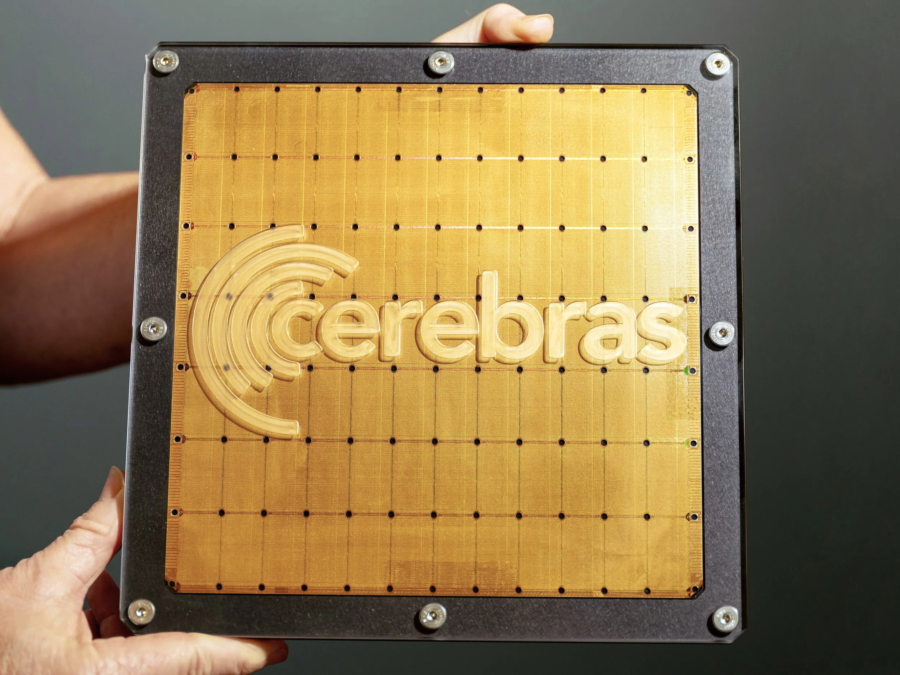

In recent years, artificial intelligence hardware has been dominated by one major technology: the GPU. Companies like NVIDIA have built entire ecosystems around graphics processing units that power everything from gaming to large-scale AI model training. But quietly, a very different kind of innovation has been developing in the background. It is called the wafer-scale engine, created by Cerebras Systems, and it has begun appearing more frequently in technology news because of its unusual design and its potential to reshape how computing systems are built.

Breaking Traditional Chip Design

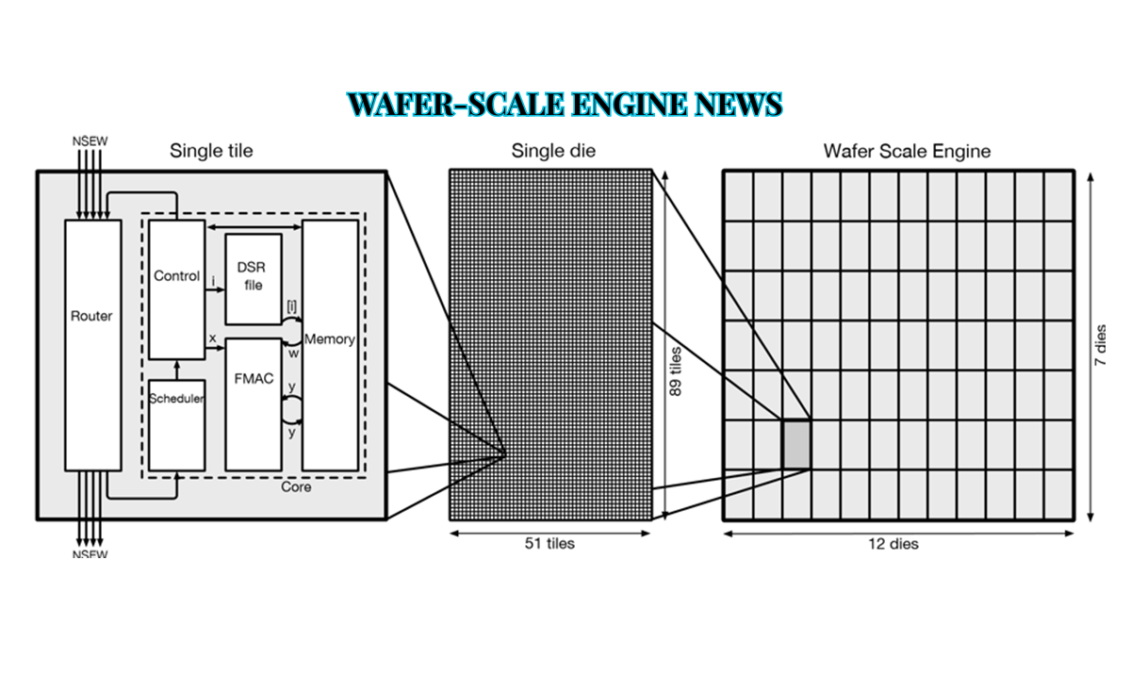

The idea behind the wafer-scale engine is not just a new chip, but a completely different way of thinking about hardware design. Instead of cutting silicon wafers into small individual chips like traditional processors, Cerebras decided to use the entire wafer as a single giant chip. This radical approach challenges decades of semiconductor manufacturing tradition and opens the door to extremely large-scale computing systems that behave differently from conventional multi-chip designs.

How Chips Were Made Before

For decades, chip manufacturing has followed a predictable process. A silicon wafer is produced, then carefully sliced into smaller chips that become CPUs or GPUs. This method works well because it reduces risk; if one chip is defective, others can still be used. However, this approach also creates limitations, especially in modern artificial intelligence workloads where thousands of chips must work together. Data has to constantly move between chips, which introduces delays, energy loss, and complexity.
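
The risk argument above can be made concrete with the simple Poisson yield model, in which the chance that a piece of silicon has zero defects falls off exponentially with its area. The sketch below is only an illustration: the defect density and both areas are hypothetical values chosen for the example, not figures for any real manufacturing process or product.

```python
import math

def poisson_yield(area_cm2: float, defects_per_cm2: float) -> float:
    """Probability that a die of the given area has zero defects (Poisson model)."""
    return math.exp(-area_cm2 * defects_per_cm2)

# Hypothetical values for illustration only (assumptions, not measured data).
DEFECTS_PER_CM2 = 0.1      # assumed average defect density
SMALL_DIE_CM2 = 1.0        # assumed area of a conventional small die
WAFER_SIZED_DIE_CM2 = 400.0  # assumed area of a die that spans most of a wafer

small = poisson_yield(SMALL_DIE_CM2, DEFECTS_PER_CM2)
large = poisson_yield(WAFER_SIZED_DIE_CM2, DEFECTS_PER_CM2)

print(f"Small die defect-free probability:       {small:.3f}")
print(f"Wafer-sized die defect-free probability: {large:.3e}")
```

Under these assumed numbers, most small dies come out clean while a defect-free wafer-sized die is practically impossible, which is why a chip of that size has to tolerate defects rather than hope to avoid them.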

The Wafer-Scale Idea

Cerebras Systems challenged this entire system by asking a simple question: what if we never cut the wafer at all? The result of that question became the wafer-scale engine, often referred to as WSE. Instead of being a small chip, it is a full silicon wafer turned into a single computing system. This allows hundreds of thousands of processing cores to exist on one continuous piece of silicon, all communicating internally at extremely high speed.

| Topic | Short Information |
|---|---|
| Technology Name | Wafer-Scale Engine |
| Company | Cerebras Systems |
| What it is | A giant AI chip made from a full silicon wafer |
| Main Use | AI training and high-performance computing |
| Key Benefit | Very fast processing with massive parallel power |
| Main Competitor Area | GPU-based systems like NVIDIA |

Inside the WSE-2 Chip

The second-generation chip, known as the WSE-2, contains 850,000 AI-optimized cores along with 40 GB of on-chip memory. Cerebras has since announced a third-generation WSE-3. Because all components are placed on a single wafer, communication between cores is far faster than in traditional multi-chip systems. In conventional setups, data must travel between separate GPUs or servers, but in a wafer-scale engine, everything happens internally within one massive processor.

Competing With GPU Giants

This design is one of the main reasons the wafer-scale engine has gained so much attention. It directly challenges the current dominance of GPU-based computing systems led by NVIDIA. Today's large artificial intelligence models require enormous computational power, often spread across thousands of GPUs connected in clusters. While powerful, these clusters come with significant challenges such as slow inter-chip communication, high energy consumption, and complex infrastructure requirements.

A New Way to Scale AI Systems

Wafer-scale engines attempt to solve these problems by sharply reducing the need for multi-chip coordination. Instead of building larger and larger clusters, the idea is to build a single massive chip that can handle as much of a workload as possible internally. This shift has sparked ongoing discussions in the AI industry about whether future data centers will continue scaling through distributed GPU clusters or gradually move toward wafer-scale supercomputing systems.

How the Architecture Works

Inside a wafer-scale engine, the architecture is designed very differently from traditional processors. The wafer is divided into hundreds of thousands of identical cores connected through a high-speed mesh network. Each core has its own local memory, and the system is designed to route around defective areas of silicon so that manufacturing imperfections do not break the entire chip. This matters because producing a full wafer without defects is extremely difficult and expensive, so the system is engineered to tolerate imperfections instead.
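
To make the idea of routing around defects more tangible, here is a toy sketch of a 2D mesh in which some cores are marked as defective and a message still finds a path between two healthy cores. It uses an ordinary breadth-first search and is not Cerebras's actual mechanism; the function name `route`, the grid size, and the defect positions are all hypothetical and exist only for this example.

```python
from collections import deque

def route(rows, cols, defective, src, dst):
    """Shortest path between two cores on a rows x cols mesh, avoiding defective cores.

    Cores are (row, col) tuples and each core links to its four neighbours.
    Returns the path as a list of cores, or None if no path exists.
    """
    if src in defective or dst in defective:
        return None
    previous = {src: None}          # also serves as the visited set
    queue = deque([src])
    while queue:
        current = queue.popleft()
        if current == dst:
            path = []
            while current is not None:   # walk back from dst to src
                path.append(current)
                current = previous[current]
            return path[::-1]
        r, c = current
        for nxt in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            nr, nc = nxt
            in_bounds = 0 <= nr < rows and 0 <= nc < cols
            if in_bounds and nxt not in defective and nxt not in previous:
                previous[nxt] = current
                queue.append(nxt)
    return None

# Hypothetical 3x3 mesh with one defective core between source and destination.
path = route(3, 3, defective={(0, 1)}, src=(0, 0), dst=(0, 2))
print(path)
```

In this toy mesh the direct route along the top row is blocked, so the search detours through the second row. A real chip would handle this in hardware and configuration rather than with a per-message search, but the principle of treating bad cores as obstacles is the same.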

Faster Internal Communication

One of the most important innovations is the internal communication system. Unlike GPU clusters where data must travel through external cables or interconnects, wafer-scale engines allow data to move directly across the silicon wafer. This reduces latency and improves performance, especially in workloads like deep learning where constant data exchange is required. As a result, AI training tasks can run more efficiently in certain scenarios compared to traditional distributed systems.
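
A common back-of-the-envelope way to reason about this is the latency-bandwidth model, where the time to send a message is a fixed startup latency plus the message size divided by the link bandwidth. The sketch below applies that model to two links. The parameter values in `ON_WAFER` and `OFF_CHIP` are placeholders invented for illustration, not measurements of any Cerebras or GPU system.

```python
def transfer_time(message_bytes: float, latency_s: float,
                  bandwidth_bytes_per_s: float) -> float:
    """Estimated time to send one message: startup latency plus size / bandwidth."""
    return latency_s + message_bytes / bandwidth_bytes_per_s

# Placeholder link parameters (assumptions chosen only to show the model's shape).
ON_WAFER = {"latency_s": 1e-8, "bandwidth_bytes_per_s": 1e12}
OFF_CHIP = {"latency_s": 1e-6, "bandwidth_bytes_per_s": 1e11}

for size in (64, 64_000, 64_000_000):
    on = transfer_time(size, **ON_WAFER)
    off = transfer_time(size, **OFF_CHIP)
    print(f"{size:>12,d} bytes  on-wafer {on:.3e} s  off-chip {off:.3e} s")
```

The model shows why latency matters most for the small, frequent messages typical of deep learning: for tiny transfers the fixed startup cost dominates, so a link with lower latency wins even before bandwidth comes into play.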

Business Growth and Market Interest

Beyond the technical architecture, interest in wafer-scale engines has also been driven by the business growth and visibility of Cerebras Systems. The company has gained attention from investors and the broader technology industry as demand for AI computing power continues to rise rapidly. Reports have highlighted strong interest in alternative computing architectures as companies look for ways to reduce dependence on GPU clusters.

Cerebras has positioned itself not as a direct replacement for GPUs in all tasks, but as a specialized solution for large-scale AI training and scientific computing.

Real-World Applications

In real-world applications, wafer-scale engines are already being used in areas where extreme computational power is required. In artificial intelligence, they help train large language models by processing massive datasets efficiently. In scientific research, they are used for simulations in physics, chemistry, and climate modeling, where large-scale parallel computation is essential. In healthcare and genomics, wafer-scale systems help accelerate drug discovery and genetic analysis by processing complex biological data more quickly. Some cloud providers have even begun offering access to wafer-scale computing as a service, allowing organizations to rent this powerful hardware instead of building their own infrastructure.

Key Advantages

The advantages of wafer-scale engines are significant in specific use cases. Because all cores exist on a single chip, communication between them is extremely fast. This enables massive parallel processing, where hundreds of thousands of operations can run simultaneously. It also reduces system complexity because organizations do not need to manage large clusters of separate machines. In addition, reduced inter-chip communication can lead to better energy efficiency for certain workloads.

Technical Challenges

However, despite these advantages, the technology also faces serious limitations. Manufacturing a full wafer-scale chip is extremely complex, and even small defects can increase production costs. These systems are also very expensive, meaning they are currently limited to enterprise and research environments. Additionally, while wafer-scale engines perform well in AI training, they are not designed to replace GPUs in every scenario, especially in consumer applications like gaming or general-purpose computing. Heat management is another challenge, as such large chips generate significant thermal output and require advanced cooling systems.

Competing With NVIDIA’s Ecosystem

The biggest question surrounding wafer-scale engines is how this technology competes with the established GPU ecosystem, particularly the dominance of NVIDIA. NVIDIA has built a powerful advantage through its hardware, software ecosystem, and widespread industry adoption. Its CUDA platform is deeply integrated into AI development workflows, making it difficult for new architectures to replace GPUs entirely.

Wafer-scale engines, on the other hand, focus on a different strategy. Instead of replacing GPUs across all workloads, they aim to dominate specific high-performance AI training and research tasks where their architecture provides clear benefits.

Coexistence Instead of Replacement

Because of this, many experts believe wafer-scale engines will not replace GPUs completely but will instead coexist with them as specialized systems. GPUs will likely continue to dominate general AI computing, while wafer-scale systems may serve as high-end solutions for large-scale models and scientific research.

Future Outlook

Looking ahead, the future of wafer-scale engines depends on several key factors. Manufacturing improvements will determine whether production can become more reliable and cost-effective. Software development will be critical to ensure compatibility with existing AI frameworks. And finally, the continued growth of artificial intelligence itself will influence demand for high-performance computing systems.

As AI models continue to expand in size and complexity, the need for more efficient computing architectures will only increase.

Final Thoughts

In conclusion, the wafer-scale engine represents more than just a hardware innovation. It reflects a deeper shift in how the technology industry is thinking about computing architecture itself. By challenging the traditional model of cutting wafers into small chips, Cerebras Systems has introduced a radically different approach that pushes the limits of performance and design.

While the technology is still developing and faces real challenges, it has already influenced the direction of AI hardware research and sparked important conversations about the future of computing infrastructure.

Whether wafer-scale engines eventually become mainstream or remain a specialized tool for advanced workloads, they have already earned their place in the ongoing evolution of technology, and they continue to be a major topic in global AI hardware news.

FAQs

Q: What is a wafer-scale engine?

A: A wafer-scale engine is a giant AI chip that uses an entire silicon wafer as one processor instead of cutting it into smaller chips.

Q: Who created the wafer-scale engine technology?

A: The wafer-scale engine was developed by Cerebras Systems for high-performance AI computing.

Q: Why is the wafer-scale engine important?

A: It is important because it challenges traditional GPU systems and introduces a new approach to ultra-large AI computing hardware.

Q: How is a wafer-scale engine different from GPUs?

A: Unlike a GPU cluster, it uses one massive chip with hundreds of thousands of cores, reducing the need for multiple connected devices.

Q: What are the benefits of wafer-scale engine technology?

A: It offers fast on-chip communication, massive parallel computing power, and simplified AI infrastructure for large-scale machine learning tasks.